In January 2026, a class action lawsuit changed how organisations need to think about AI hiring tools and FCRA compliance. It did so not by showing that algorithms are biased, but by arguing that they don’t need to be biased to be unlawful.

The case redefining AI hiring risk

In Kistler et al. v. Eightfold AI Inc., plaintiffs allege that personal data on over one billion workers was collected, analysed, and used to score candidates on a 0 to 5 scale, derived from aggregated CV data, inferred skills, career history, and other profile signals.

According to the complaint, candidates with lower scores were filtered out before any human reviewed their application.

The critical detail is this: the claim is not about discrimination. It is about opacity.

What FCRA actually means for AI hiring now

The Fair Credit Reporting Act (FCRA) is a US data rights law that’s suddenly very relevant to AI hiring. It was enacted in 1970 to regulate credit checks, but the core principle is what matters today: if a system collects data on someone, evaluates them, and produces an output that is used to make a decision about them, they have a right to know about it, see it, and challenge it.

Modern AI hiring tools are effectively doing exactly that. They aggregate candidate data, analyse it, and generate scores or recommendations that determine who progresses. Under FCRA, that starts to look less like software and more like a decision system that requires transparency and accountability. The law has not changed. Hiring technology has.

If AI hiring tools fall within FCRA, employers must disclose how data is used, obtain candidate consent, provide access to evaluations, and allow individuals to dispute decisions. Most current systems do none of this. The Eightfold case tests whether AI hiring tools are effectively operating as hidden decision engines without meeting these long-standing requirements.

The shift from bias to process

For years, the industry has focused on whether AI hiring tools produce biased outcomes. This case reframes the issue entirely. It asks a simpler, more dangerous question: are employers making decisions using systems candidates cannot see, understand, or challenge? If the answer is yes, compliance risk exists regardless of whether bias can be proven.

The growing liability squeeze on employers

The Eightfold case sits alongside Mobley v. Workday, in which a federal court allowed claims to proceed on the theory that an AI vendor can act as an agent of the employers using its software. Together they create a dual pressure point: Mobley targets outcomes, Eightfold targets process. Meanwhile, industry data shows that 88% of AI vendors cap liability, often to subscription fees, while only 17% warrant regulatory compliance. This leaves employers exposed. AI hiring tools may scrape unknown data sources, apply opaque scoring logic, and filter candidates automatically, yet the legal risk sits with the organisation using them.

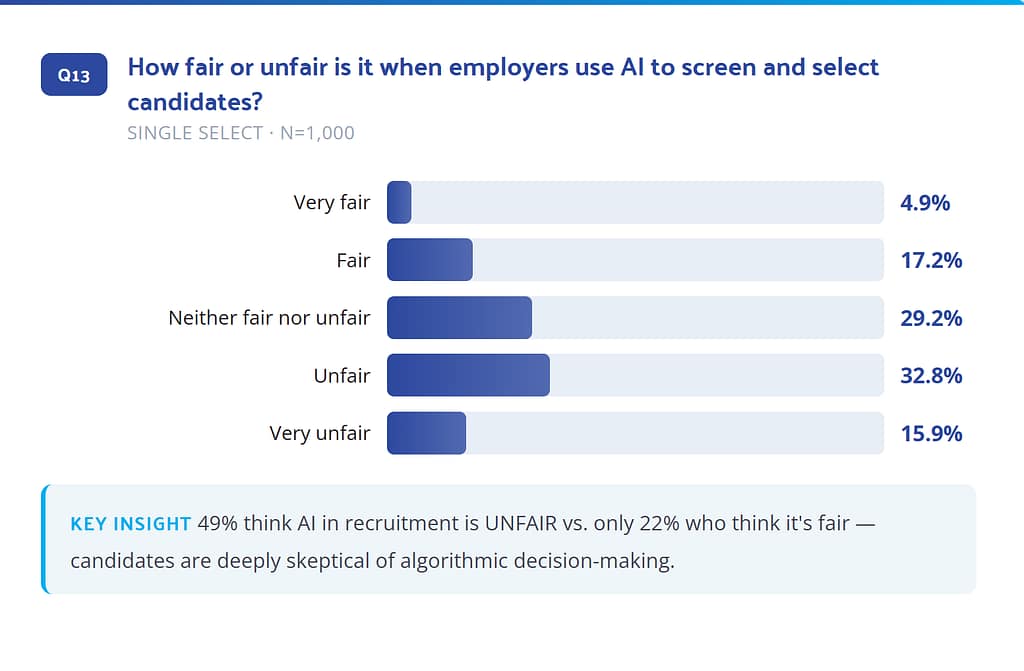

Candidates already distrust AI screening

This legal shift aligns with candidate sentiment. ThriveMap’s 2026 research shows that 49% of candidates believe AI screening is unfair, 72% have accepted jobs that were misrepresented, and 60% of those left sooner than planned. This is not just a compliance issue. It is a trust failure.

[Access the full data here: https://hubs.la/Q04dbnw_0]

Treating this as a legal checkbox misses the point.

Opaque filtering systems do not just create regulatory exposure. They produce weaker hiring outcomes. Filtering candidates without transparency leads to poor alignment between candidate and role, reduced trust in the process, and higher early attrition. These are the same problems organisations are trying to solve.

What compliant AI hiring now requires

If AI hiring tools fall under FCRA, three capabilities become essential: clear explanation of how decisions are made, meaningful human oversight before rejection, and candidate visibility into how they were evaluated. Most current systems cannot deliver this.
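For teams wondering what those three capabilities could look like inside a screening workflow, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of any vendor’s system or a statement of what FCRA requires: the `Evaluation` record, the `PASS_MARK` threshold, and the `decide` and `candidate_view` functions are all hypothetical names invented for this example.

```python
from dataclasses import dataclass, field
from typing import Optional

PASS_MARK = 3.0  # assumed cut-off on an illustrative 0 to 5 scale


@dataclass
class Evaluation:
    """Hypothetical record of one automated assessment of a candidate."""
    candidate_id: str
    score: float
    reasons: list[str] = field(default_factory=list)  # plain-language explanation
    reviewer: Optional[str] = None  # filled in once a human has reviewed the case


def decide(ev: Evaluation) -> str:
    """Return 'advance', 'reject', or 'needs_human_review'."""
    # Capability 1: no decision without a recorded explanation.
    if not ev.reasons:
        raise ValueError("evaluation has no explanation attached")
    if ev.score >= PASS_MARK:
        return "advance"
    # Capability 2: a low score alone never rejects; a human must review first.
    if ev.reviewer is None:
        return "needs_human_review"
    return "reject"


def candidate_view(ev: Evaluation) -> dict:
    """Capability 3: what the candidate is shown about their own evaluation."""
    return {
        "score": ev.score,
        "reasons": list(ev.reasons),
        "reviewed_by_human": ev.reviewer is not None,
    }
```

In this sketch a low-scoring candidate is routed to `needs_human_review` rather than rejected, and only becomes a `reject` after a reviewer is recorded. The design choice worth noting is that the explanation is stored alongside the score, so the same record drives both the decision and what the candidate can see.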

Where ThriveMap stands

This shift exposes a fundamental divide in hiring technology. Many AI hiring tools operate as black boxes, scoring and filtering candidates silently. ThriveMap takes a different approach. Assessments are based on real job scenarios rather than abstract scoring, automated in delivery but human-reviewed in decision-making, and transparent in what is being assessed and why. As Chris Platts, CEO of ThriveMap, puts it: “The problem isn’t AI in hiring. It’s invisible AI. If a candidate can’t understand how they’re being evaluated, you’re not just creating legal risk, you’re making worse hiring decisions.”

The shift from screening to understanding

Hiring has been optimised for speed and volume. AI accelerated that. But hiring failures do not happen at the top of the funnel. They happen after the hire, when expectations and reality diverge. The organisations that adapt will move from filtering candidates out to understanding them in context.

The Eightfold case signals a structural shift. If AI hiring tools influence decisions, they may need to meet the same standards as consumer reporting systems. That means transparency, accountability, and human oversight are no longer optional. Organisations that cannot explain how their hiring process works are now exposed, both legally and commercially.

ThriveMap: Human-reviewed, intelligently automated, built to close the expectation gap and reduce early attrition. Get started now: https://thrivemap.io/get-started-now/